What is preventing EU organizations from enabling Microsoft Copilot Cowork today?

The ever-recurring discussion on Microsoft Enterprise Data Protection and the Microsoft EU Data Boundary

Important note: this is not a legal interpretation. It is a practical, architecture-first explanation of how Microsoft’s contractual commitments (DPA, EDP), geographic commitments (EU Data Boundary), and model-provider choices (OpenAI, Anthropic, xAI) overlap, and where they do not.

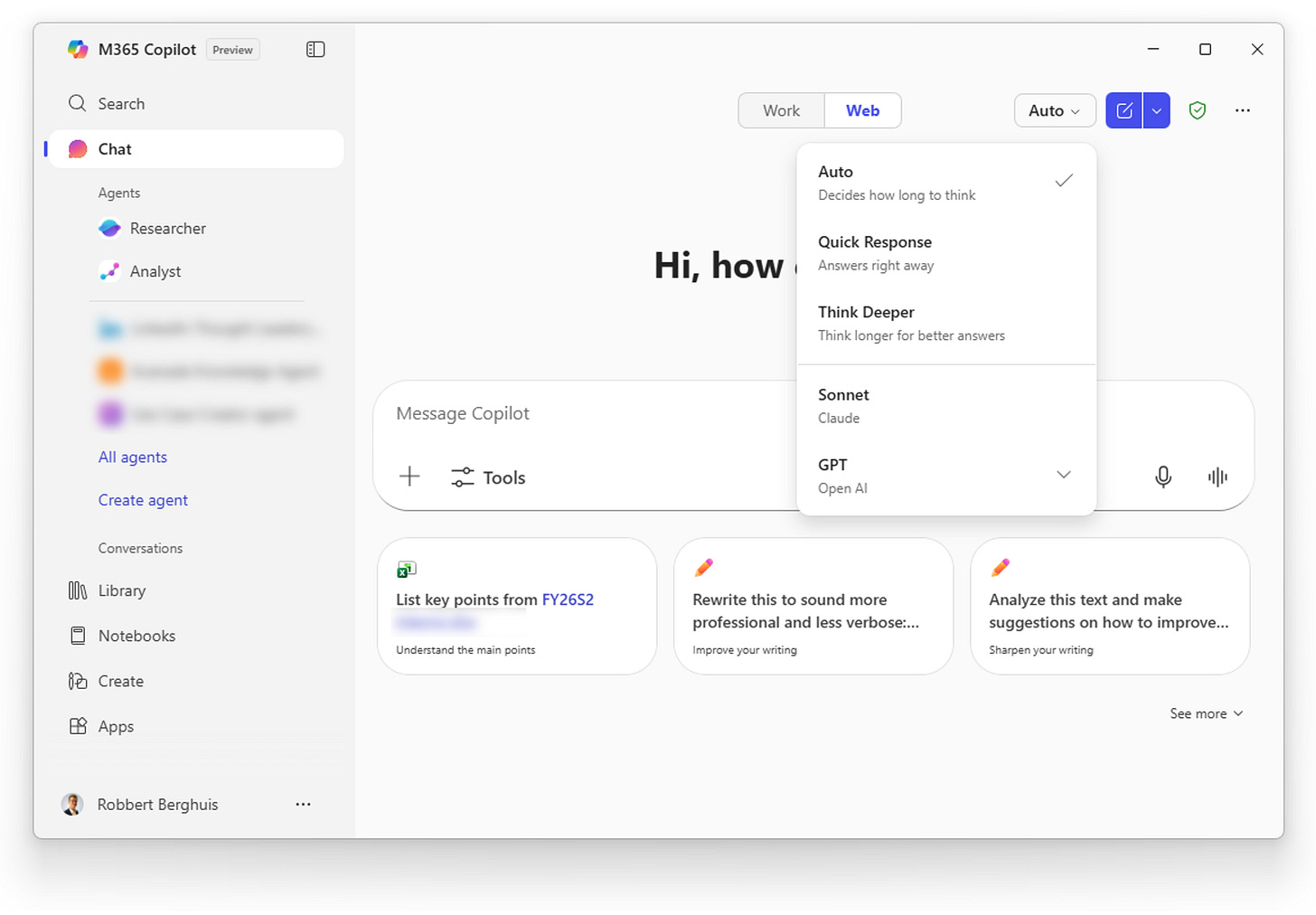

Microsoft just announced Microsoft Copilot Cowork, powered by Anthropic’s Claude Cowork. This means that Microsoft will (finally) enable multi-model in Microsoft 365 Copilot. Within Microsoft 365 Copilot this works great, and I really like that I can choose between models through this unified interface. Sadly, this new feature is not yet available for EU organizations. To understand why, we need a better understanding of Microsoft’s various agreements and commitments.

Two Microsoft promises. Two different questions.

Before we dive in: this is, quite frankly, a moving target. By the time you read this, it might no longer reflect the latest commitments.

Microsoft’s commitments

The Microsoft Products and Services Data Protection Addendum (DPA) and Enterprise Data Protection (EDP) are Microsoft’s contractual frameworks and commitments for how Microsoft processes customer data across Microsoft Online Services.

The DPA is Microsoft’s legally binding contract that defines what Microsoft is allowed to do with customer data, under which role, and with which enforceable privacy, security, and processing obligations.

The EDP is the Microsoft DPA enforced at runtime, and in the context of Microsoft 365 Copilot, it only applies as far as Microsoft controls the model and the processing path.

When users access Microsoft 365 Copilot using an Entra ID work account, Microsoft frames this as Enterprise Data Protection, with Copilot interactions governed under the same enterprise-grade terms you already rely on for Microsoft 365.

In practical terms, the Microsoft 365 Copilot commitments include:

Prompts and responses are not used to train foundation models for Microsoft 365 Copilot

Copilot respects your identity model and permissions, so it only surfaces data a user already has access to.

Microsoft explicitly states that Microsoft 365 Copilot interaction data (prompts and responses) is stored as Copilot activity history, and that admins can manage it using Microsoft Purview. The Purview integration is often overlooked but a key factor differentiating Microsoft 365 Copilot from Microsoft Copilot (consumer).

When using Microsoft 365 Copilot, the integration with Purview ensures:

Prompts and responses are discoverable via Purview eDiscovery

Retention policies apply to Copilot interaction data

Sensitivity labels and encryption are enforced

DLP policies can evaluate Copilot outputs

Audit logs capture Copilot activity

and many more…

In other words, through EDP Microsoft 365 Copilot becomes governable using the same control plane you already use for Microsoft 365. The same applies to Microsoft Copilot Studio. However, looking at the commitments for the consumer version of Microsoft Copilot, the near opposite is the case.

My advice: require end users to always sign in to Microsoft 365 Copilot (work context) when they use company-managed devices, and back this up with proper training and awareness.

The EU Data Boundary

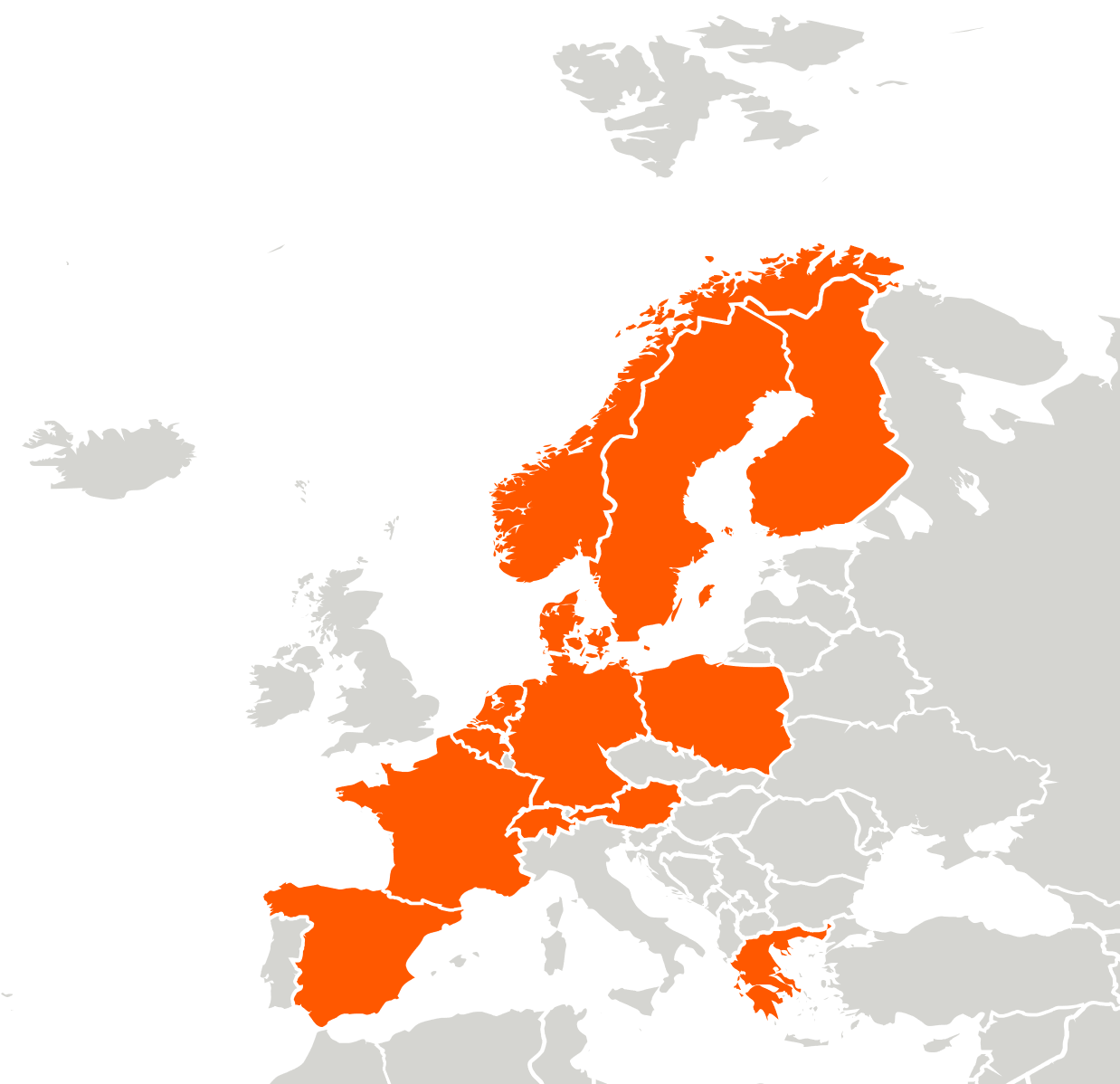

The EU Data Boundary for the Microsoft Cloud is Microsoft’s commitment to enable EU and EFTA customers to store and process covered data categories within the EU and EFTA region for core services. Microsoft positions the EU Data Boundary as applying across core cloud services including Microsoft 365 and most Azure services, and provides dedicated transparency documentation and updates.

For GenAI solutions like Microsoft 365 Copilot, “where processing happens” matters because inference is data processing. For Microsoft 365 Copilot, calls to the LLM are routed to nearby data centers but can use other regions during high utilization. For organizations subject to the EU Data Boundary, Microsoft ensures that the traffic and processing stay within that boundary through additional safeguards.
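To make the routing idea concrete, here is a minimal sketch of "nearest region first, spill over under load, but never leave the boundary". It is not Microsoft's actual routing logic: the function name `pick_region`, the region names, and the 0.8 utilization threshold are all assumptions chosen for illustration.

```python
from typing import Dict, List, Optional, Set


def pick_region(
    candidates: List[str],
    utilization: Dict[str, float],
    boundary: Optional[Set[str]] = None,
    max_utilization: float = 0.8,  # hypothetical threshold, not a Microsoft value
) -> str:
    """Pick a processing region from `candidates` (ordered nearest first).

    If `boundary` is given, regions outside it are never considered,
    even when every in-boundary region is busy.
    """
    eligible = [r for r in candidates if boundary is None or r in boundary]
    if not eligible:
        raise ValueError("no candidate region lies inside the boundary")

    # Prefer the nearest eligible region that still has headroom.
    for region in eligible:
        if utilization.get(region, 0.0) < max_utilization:
            return region

    # Everything is busy: stay with the nearest eligible region rather
    # than spilling outside the boundary.
    return eligible[0]


# Illustrative inputs only; the region names are placeholders.
regions = ["westeurope", "eastus", "northeurope"]
load = {"westeurope": 0.95, "eastus": 0.10, "northeurope": 0.40}
eu_boundary = {"westeurope", "northeurope"}

unbounded_choice = pick_region(regions, load)
bounded_choice = pick_region(regions, load, boundary=eu_boundary)
```

With these inputs, the unbounded call spills over to the lightly loaded out-of-boundary region, while the bounded call picks the next in-boundary region instead. That difference is the practical meaning of a processing boundary.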

The image above is a representation of the Microsoft data centers located within the EU Data Boundary. Microsoft publishes a detailed view here.

One AI surface, three very different model realities

Microsoft increasingly presents Copilot as a single, coherent experience.

Under the hood, however, not all models plugged into Copilot are governed in the same way. This distinction matters far more than most people realize. To understand why, it helps to look at OpenAI, Anthropic, and xAI side-by-side, not from a capability perspective, but from a governance and enterprise‑control perspective.

OpenAI ChatGPT

When people talk about “Copilot being compliant,” they are usually talking about OpenAI models running via Azure OpenAI. This is the baseline Copilot experience. What characterizes this model path:

Models are accessed via Azure OpenAI, not OpenAI’s public services

Processing happens inside Microsoft‑controlled infrastructure

Covered by Microsoft Product Terms and the Microsoft DPA

Explicit guarantee that customer data is not used to train foundation models

Fully integrated with Microsoft Purview (eDiscovery, retention, DLP, audit)

This combination allows Microsoft to make strong, end‑to‑end enterprise statements such as: “Your data stays within the Microsoft service boundary, is not used for training, and remains governable using existing M365 controls”. We’ll use this as a reference architecture to compare the others to.

Anthropic Claude

Microsoft introduced Anthropic models, and today they are part of the DPA and EDP when used within Microsoft Copilot Studio, but they are not part of the EU Data Boundary yet. Today, we can also use these models in Microsoft Foundry and Microsoft 365 Copilot chat. As such, these models sit in a more nuanced position. They are:

Integrated into Microsoft ecosystems (Copilot, Copilot Studio, Foundry)

Exposed through Microsoft tooling and billing

Positioned as “enterprise‑ready” from a capability perspective

But from a governance perspective, there is an important distinction.

Key characteristics:

Claude models are operated by Anthropic

Microsoft treats Anthropic as a subprocessor (see our previous article on this)

Anthropic models are out of scope for the EU Data Boundary and in‑country LLM processing commitments

Runtime and training guarantees are not identical to Azure OpenAI

As a result, Microsoft cannot automatically extend all of its standard data residency and processing guarantees. This does not mean Claude is “unsafe” or “non‑enterprise”, but rather that Microsoft’s compliance posture changes when using Claude as the primary reasoning model.

xAI Grok

Microsoft also introduced xAI’s Grok model in Microsoft Copilot Studio. As when Anthropic was introduced, organizations need to explicitly opt in to xAI’s terms and conditions. At the moment, Grok is the cleanest example of a model that is explicitly outside Microsoft’s standard enterprise guarantees. In plain language, when you want to connect with the xAI models you agree that:

xAI models are hosted by xAI outside of Microsoft.

If your organization chooses to use an xAI model, your data is processed outside Microsoft-managed environments and audit controls.

Therefore, Microsoft’s customer agreements, including Product Terms and the DPA, do not apply.

Microsoft’s data residency commitments and other enterprise assurances do not apply either.

Use is governed instead by xAI’s Terms of Service and Data Processing Addendum.

That is exactly why there is an explicit opt-in and consent pattern: it is a different legal and operational relationship. That does not mean you cannot use it - you can, but it sits outside Microsoft’s DPA, residency commitments, and audit controls. You are choosing a separate processor and separate terms.

The three realities

If you want to remember this, it helps to highlight boundaries:

OpenAI (Azure OpenAI): Microsoft controls the runtime, the boundary, and the guarantees

Anthropic (Claude): Microsoft integrates it, but does not fully control the boundary

Grok (xAI): Microsoft orchestrates access, but governance stays with the vendor

Same Copilot interface, three very different compliance realities.
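If you want to carry this comparison into your own governance tooling, it can be written down as a small lookup table. The sketch below encodes only what this article states at the time of writing. The class `ModelPath`, its field names, and the helper `eu_gaps` are hypothetical, and none of the values are read from a Microsoft API.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ModelPath:
    """Governance attributes of one model path, as summarized in this article."""
    provider: str
    covered_by_microsoft_dpa: bool   # Microsoft Product Terms and DPA apply
    in_eu_data_boundary: bool        # EU Data Boundary commitments apply
    governed_by_vendor_terms: bool   # the vendor's own terms govern use


# Snapshot of the article's summary; assumed values that may be outdated.
MODEL_PATHS: Dict[str, ModelPath] = {
    "openai": ModelPath("OpenAI (Azure OpenAI)", True, True, False),
    "anthropic": ModelPath("Anthropic (Claude)", True, False, False),
    "xai": ModelPath("Grok (xAI)", False, False, True),
}


def eu_gaps(key: str) -> List[str]:
    """List the governance gaps an EU organization should review for a path."""
    path = MODEL_PATHS[key]
    gaps = []
    if not path.covered_by_microsoft_dpa:
        gaps.append("Microsoft DPA does not apply")
    if not path.in_eu_data_boundary:
        gaps.append("outside EU Data Boundary commitments")
    if path.governed_by_vendor_terms:
        gaps.append("governed by the vendor's own terms")
    return gaps


for key in MODEL_PATHS:
    print(MODEL_PATHS[key].provider, "->", eu_gaps(key) or "no gaps listed")
```

Keeping the table in one place makes it easy to update when Microsoft changes a commitment, which, given how quickly this moves, it likely will.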

Why Copilot Cowork is not yet available for (most) EU organizations

Microsoft describes Copilot Cowork as integrating Anthropic’s Claude family of models into Microsoft 365 Copilot experiences as part of a new “Wave 3” push. If Cowork depends on Claude for core functionality, then EU availability becomes constrained by two facts we already established:

Anthropic models in Microsoft 365 Copilot experiences are out of scope for EU Data Boundary commitments.

Claude via Microsoft Foundry preview runs on Anthropic infrastructure and is offered as “Global Standard”. Anthropic does mention a specific US Datazone “coming soon,” but has not made a statement on an EU datazone as far as I know.

When you do your Copilot rollout planning, treat “Copilot” as a platform and treat “models” as a dependency. Your compliance posture can change when the model router changes. Your governance needs to reflect that reality, not the marketing name.
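One way to put "models as a dependency" into practice is to record, per Copilot feature, which model providers it relies on, and to check that list against what your organization accepts. The sketch below is purely illustrative: the `Feature` class, the feature-to-provider mapping, and the `EU_BOUNDARY_PROVIDERS` set are assumptions based on this article, not an official inventory.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Iterable, Set

# Assumed snapshot: providers this article describes as covered by
# EU Data Boundary commitments at the time of writing.
EU_BOUNDARY_PROVIDERS: FrozenSet[str] = frozenset({"openai"})


@dataclass
class Feature:
    """A Copilot feature and the model providers it depends on (hypothetical)."""
    name: str
    model_providers: Set[str] = field(default_factory=set)


def allowed_for_eu_tenant(
    feature: Feature,
    in_boundary: FrozenSet[str] = EU_BOUNDARY_PROVIDERS,
) -> bool:
    """A feature passes only if every provider it depends on is in the boundary."""
    return feature.model_providers <= in_boundary


def review(features: Iterable[Feature]) -> Dict[str, bool]:
    """Return a per-feature verdict for an EU-bound tenant."""
    return {f.name: allowed_for_eu_tenant(f) for f in features}


# Illustrative dependency list; adjust to your own inventory.
verdicts = review([
    Feature("Copilot Chat (default model)", {"openai"}),
    Feature("Copilot Cowork", {"anthropic"}),
])
print(verdicts)
```

The point of the design is that the verdict follows the dependency list, not the product name: if a feature's model dependencies change, rerunning the review surfaces the change in compliance posture.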

Microsoft is clearly pursuing a multi-model strategy that includes Anthropic across Copilot surfaces. As excitement and fear of missing out grow, it is highly likely that Claude will move toward more region-specific hosting options that better fit EU boundary expectations, either directly or through Azure OpenAI “hosting”. This would remove a major blocker for EU rollout of Claude-dependent experiences.

The bigger picture: Copilot as a governed gateway

Microsoft’s direction is increasingly clear: the value proposition is not a single model. It is the combination of:

enterprise identity,

enterprise data plane,

compliance boundary controls, and

the ability to route workloads to multiple models under one operational umbrella.

It’s not a feature play; it is a platform consolidation move that positions Microsoft as the gateway to GenAI for many enterprises. That strategy creates a powerful “gateway” position: one interface, one agent builder, one governance story on the Microsoft side. Microsoft Copilot Cowork is positioned as part of that push.