How to stop GenAI from processing what it shouldn't

Why Copilot needs runtime enforcement, not just policies on paper. A view on DLP enforcement to mitigate known control gaps in Copilot Chat and Copilot Agents.

This is part of a series of posts about data security and why AI is driving us to modify our approach. Previously, I wrote that the shift GenAI introduces is not technical, but behavioral. What used to be findable became askable: what used to require intent and effort is now one well‑phrased question away. We saw that confidentiality drifts over time and that, through evolving sharing behavior, access becomes detached from intent. Copilot doesn’t create new data protection problems, but it makes old ones impossible to ignore.

If you missed the previous posts, I highly recommend reading them:

Data and AI: why GenAI solutions turn old audit findings into today’s risk

Confidentiality drift: a hidden risk Copilot makes impossible to ignore

From access to execution

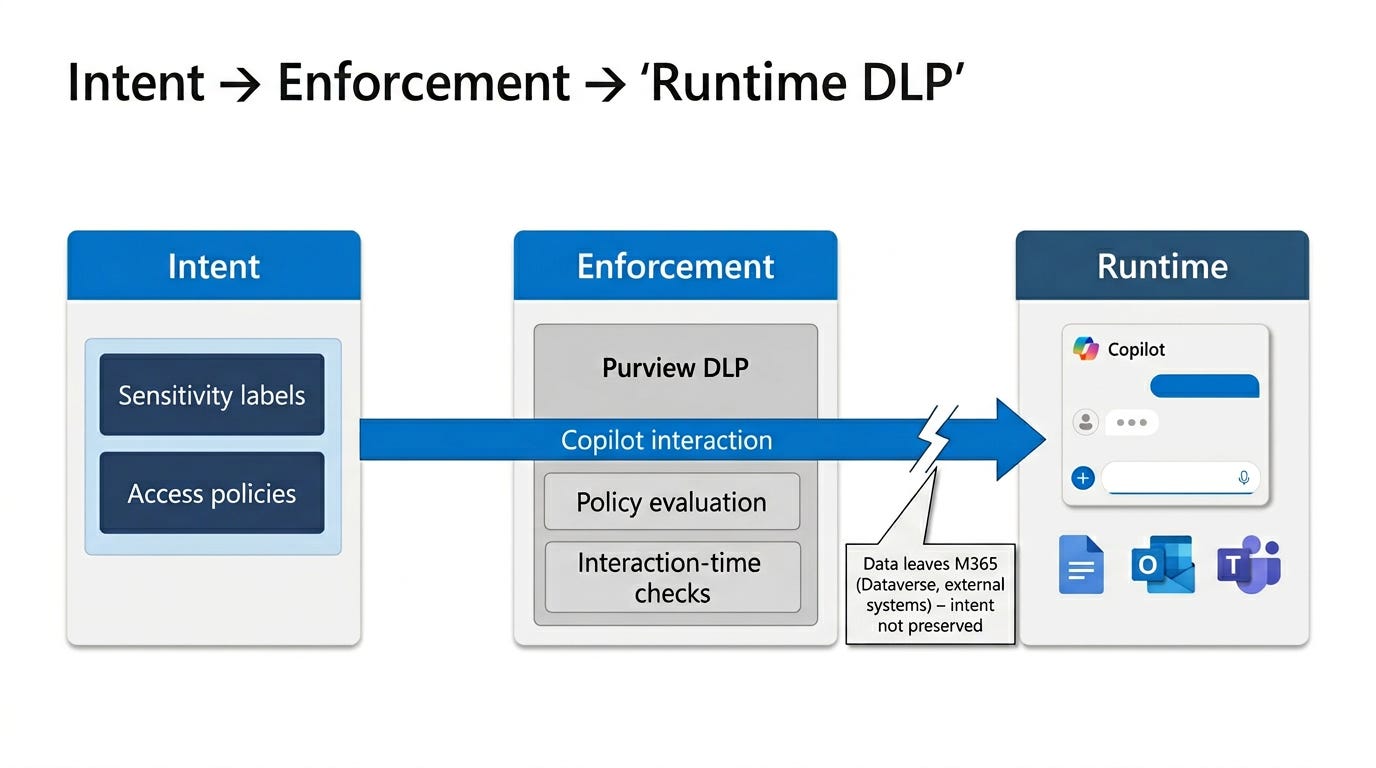

This is where everything starts to converge. This post is about one question: what actually stops Copilot from processing data when it shouldn’t? Once you accept that Copilot operates strictly within the reality you have built, the question is no longer what it can access, but what prevents it from processing what it shouldn’t. The answer is not permissions, and not policies alone. It is whether intent survives all the way into runtime enforcement.

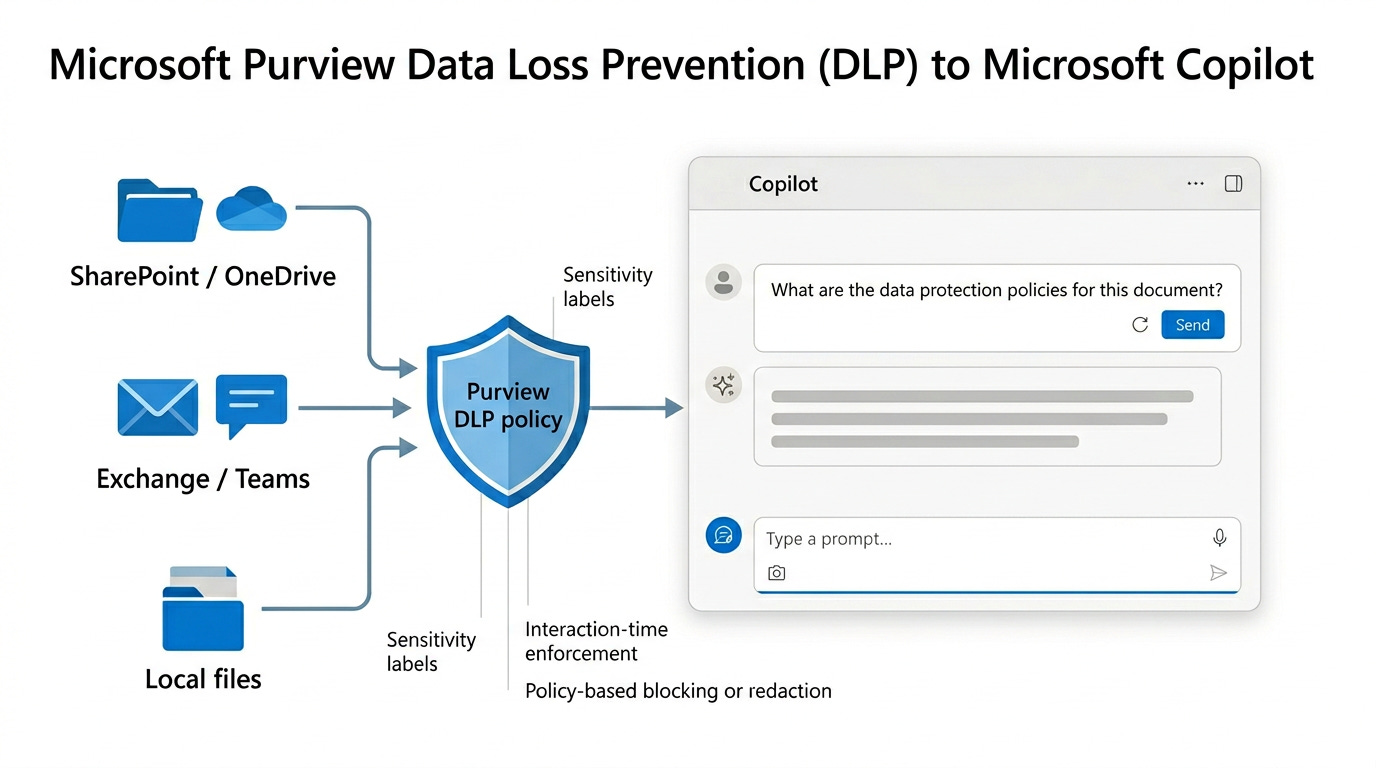

For those unfamiliar with it: Microsoft Purview acts as the policy engine, providing classification and detection capabilities. All data that reaches Copilot, whether directly in the prompt or through the sources it grounds on, is evaluated, and Microsoft Copilot uses only the compliant content when formulating a response.
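To make that flow concrete, here is a minimal conceptual sketch in Python, assuming a single DLP rule that excludes content carrying a hypothetical "Highly Confidential" label. `GroundingItem`, `BLOCKED_LABELS`, and `filter_grounding` are illustrative names, not part of any Microsoft API; the real evaluation happens inside the service.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Conceptual model only: these names are hypothetical and are NOT a
# Microsoft Purview or Copilot API.


@dataclass(frozen=True)
class GroundingItem:
    source: str            # e.g. the prompt itself or a document it draws on
    label: Optional[str]   # sensitivity label visible at interaction time, if any
    text: str


# Hypothetical DLP rule: content with these labels must not be processed.
BLOCKED_LABELS = {"Highly Confidential"}


def filter_grounding(items: List[GroundingItem]) -> Tuple[List[GroundingItem], List[str]]:
    """Return the content Copilot may use, plus audit entries for what was excluded."""
    allowed: List[GroundingItem] = []
    audit: List[str] = []
    for item in items:
        if item.label in BLOCKED_LABELS:
            audit.append(f"excluded {item.source}: label '{item.label}' is blocked")
        else:
            allowed.append(item)
    return allowed, audit


items = [
    GroundingItem("roadmap.docx", "General", "Q3 roadmap"),
    GroundingItem("payroll.xlsx", "Highly Confidential", "salary data"),
    GroundingItem("payroll-export.csv", None, "salary data"),  # same content, label lost
]
allowed, audit = filter_grounding(items)
print([i.source for i in allowed])  # ['roadmap.docx', 'payroll-export.csv']
print(audit)
```

The third item previews the theme of the rest of this post: a rule keyed on labels can only act on labels that are still present when the interaction happens.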

Policy does not create control

Copilot honors existing permissions and grounds its responses via Microsoft Graph. That statement is technically correct, and deeply misleading if taken as reassurance. Permissions describe who may access something. They say very little about how that access should be used.

Most enterprise controls were designed for access decisions made by humans, at human speed. Copilot operates at interaction time, at machine speed. If you want consistency in that world, policy alone is not enough. You need enforcement the moment the interaction happens. That is where Data Loss Prevention stops being a compliance feature and becomes an architectural line of defense.

In traditional security models, the “policy ≠ control” distinction was tolerable because enforcement happened at human speed, through deliberate actions and visible workflows. AI changes that equation. Every interaction is an execution, every prompt a request for synthesis, not retrieval. In that world, permissions merely answer who may see something, not whether that access should be exercised in this context. Consistent outcomes at machine speed require controls that operate at the moment of interaction, not just rules defined in advance.

Where the model still works and where it starts to crack

Up to a point, the story is clean. Sensitivity labels express intent, DLP enforces that intent at interaction time and Copilot executes within those boundaries.

That model still works, but only while the data stays on a path where that intent survives. When data changes shape, storage location, or ownership model, something subtle happens. Nothing breaks. Nothing alerts. But the enforcement story changes, even if everything looks the same on the surface.

When agents enter the picture

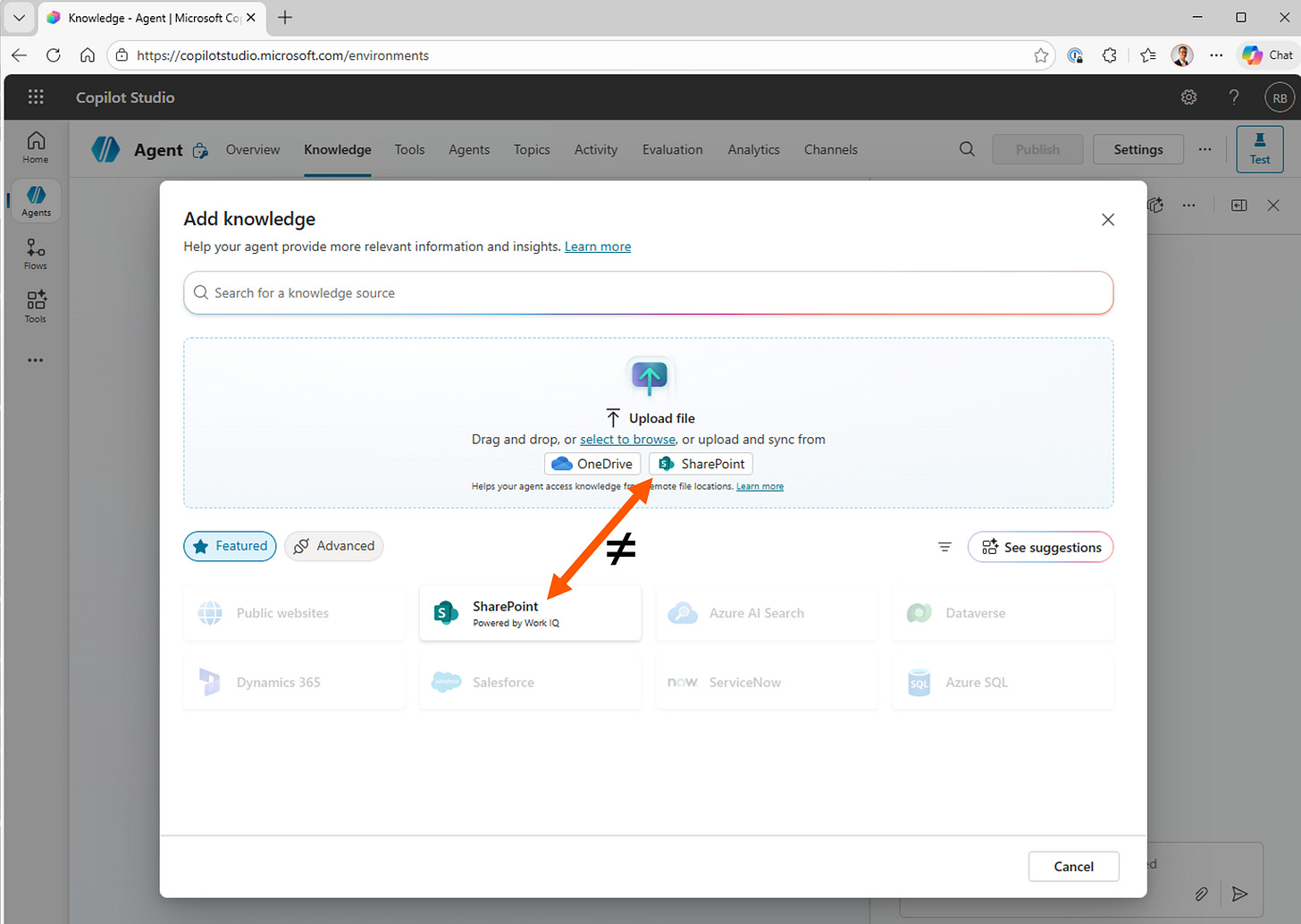

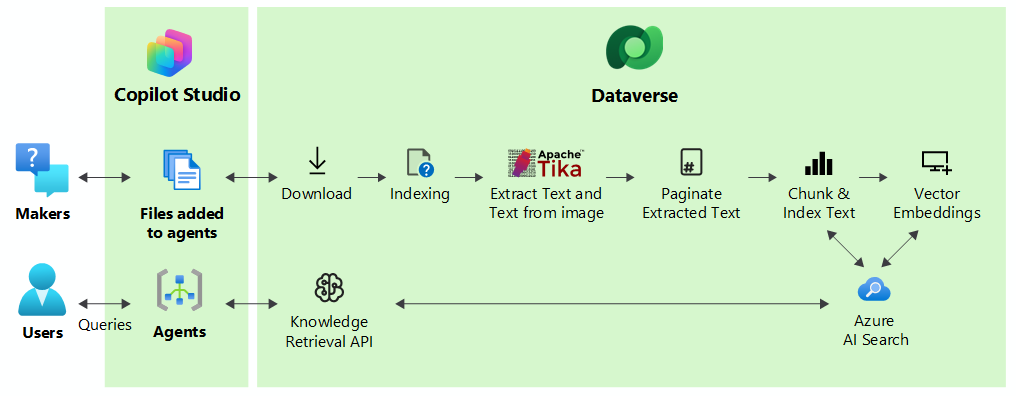

This is where the model still looks intact, but the enforcement assumptions quietly change. That fracture becomes impossible to ignore once you start looking more closely at Copilot Agents. On paper, agents feel like a natural extension: another Copilot surface, another way to work faster. But when you follow the data instead of the feature, the picture shifts.

Depending on how you add knowledge to your agent, the intent either survives or it doesn’t. Adding knowledge as an upload and adding it by reference are two very different approaches. When you upload data into a Copilot Agent instead of referencing it, it’s ingested into Dataverse. At that point, the original Microsoft Information Protection label no longer exists in a way that Purview DLP for Copilot can enforce.

This is not a bug. It’s an architectural reality. Dataverse has its own security model and governance strengths, but it is not a transparent continuation of your Microsoft 365 classification model. The intent that labeling expressed does not automatically survive that jump.

That is the governance gap, and it often stays invisible because uploading feels harmless.

My guidance: Treat agent data upload as a governance decision, not a convenience. If data needs a label to exist safely in Microsoft 365, require an explicit decision before onboarding it into a Dataverse‑backed agent. If you can’t explain who re‑establishes intent there, you are not enforcing policy. You are hoping.
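A minimal sketch of such a gate, assuming a hypothetical onboarding step that sees each file’s label and how it will be added to the agent. `KnowledgeMode`, `OnboardingRequest`, `review`, and the `intent_owner` field are illustrative names only; they do not correspond to any Copilot Studio, Dataverse, or Purview API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple

# Hypothetical onboarding gate for agent knowledge. None of these names
# correspond to a Copilot Studio, Dataverse, or Purview API.


class KnowledgeMode(Enum):
    REFERENCE = "reference"  # content stays in Microsoft 365; its label stays visible to DLP
    UPLOAD = "upload"        # content is copied into the agent's Dataverse-backed store


@dataclass(frozen=True)
class OnboardingRequest:
    file_name: str
    label: Optional[str]
    mode: KnowledgeMode
    intent_owner: Optional[str] = None  # who re-establishes intent after upload


def review(request: OnboardingRequest) -> Tuple[bool, str]:
    """Allow uploads of labeled content only when someone explicitly owns intent afterwards."""
    if request.mode is KnowledgeMode.REFERENCE:
        return True, "referenced: label remains enforceable at interaction time"
    if request.label is None:
        return True, "uploaded: no label to carry over"
    if request.intent_owner:
        return True, f"uploaded by explicit decision; intent owned by {request.intent_owner}"
    return False, f"rejected: label '{request.label}' will not survive upload and nobody owns intent"


print(review(OnboardingRequest("contract.pdf", "Confidential", KnowledgeMode.UPLOAD)))
print(review(OnboardingRequest("contract.pdf", "Confidential", KnowledgeMode.REFERENCE)))
```

The gate rejects by default: uploading labeled content is still possible, but only as a recorded decision with a named owner, not as a side effect of convenience. Note that unlabeled uploads pass, which mirrors the broader limitation that a label-based check can only act on labels that exist.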

When Copilot says “no”, whose voice is that?

If you’ve followed along so far, this next one may bend your mind a little, because there are more patterns that can surface. Sometimes Copilot refuses to answer, withholds content, or produces a cautious reply. From the outside, that might look like DLP. But underneath, it’s often something else.

Copilot’s guardrails, prompt‑injection defenses, and harmful‑content filters protect the platform, not your policy. When they intervene, they do so under Microsoft’s authority, not yours. The outcome may look identical to a DLP block, but the source of truth is not.

My guidance: Never treat Copilot refusals as evidence of governance. If you can’t trace that refusal to your own policy (logged and auditable), it isn’t part of your control model. Make that distinction explicit when explaining Copilot behavior to risk, legal, or audit teams.

When Copilot refuses, not all “no” responses are equal. Different enforcement mechanisms can produce similar outcomes while originating from entirely different authorities, and treating them as interchangeable creates false confidence. For governance to hold, you need to be able to tell which system is speaking and under whose intent the refusal is enforced. When you talk to your risk, audit, and legal teams, make that distinction explicit:

Your company’s policy: Auditable, intentional, and enforceable under your governance model

Platform safety controls (Microsoft or other vendors): Designed to protect the service, not to express your organizational intent

Model-level guardrails: Probabilistic and opaque, with outcomes that may resemble policy enforcement but are not accountable to it
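One way to make the distinction operational is to attribute every refusal before anyone cites it as a control. The Python sketch below is hypothetical: it assumes you can export your own DLP audit events keyed by an interaction ID, and that platform interventions are flagged in some way. Neither the `platform_filter_flag` field nor that data shape comes from Microsoft documentation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict

# Hypothetical attribution check. The audit-log export and the
# platform_filter_flag field are assumptions, not real Copilot telemetry.


class RefusalSource(Enum):
    ORGANIZATIONAL_POLICY = "your company's policy"
    PLATFORM_SAFETY = "platform safety control"
    MODEL_OR_UNATTRIBUTED = "model-level guardrail or unattributed"


@dataclass(frozen=True)
class Refusal:
    interaction_id: str
    platform_filter_flag: bool = False  # a service-side filter reported that it intervened


def attribute(refusal: Refusal, policy_audit_log: Dict[str, str]) -> RefusalSource:
    """policy_audit_log maps interaction IDs to the ID of your own policy rule that fired."""
    if refusal.interaction_id in policy_audit_log:
        return RefusalSource.ORGANIZATIONAL_POLICY
    if refusal.platform_filter_flag:
        return RefusalSource.PLATFORM_SAFETY
    return RefusalSource.MODEL_OR_UNATTRIBUTED


def is_governance_evidence(refusal: Refusal, policy_audit_log: Dict[str, str]) -> bool:
    # Only a refusal traceable to your own logged policy belongs in your control model.
    return attribute(refusal, policy_audit_log) is RefusalSource.ORGANIZATIONAL_POLICY


log = {"int-001": "dlp-rule-highly-confidential"}
for r in [Refusal("int-001"), Refusal("int-002", platform_filter_flag=True), Refusal("int-003")]:
    print(r.interaction_id, attribute(r, log).value, is_governance_evidence(r, log))
```

The ordering is deliberate: a refusal is credited to your policy only when your own audit trail shows a matching rule. Everything else lands outside the control model, however similar it looks to the user.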

Where interaction‑time enforcement now lives

The recent update to the Office augmentation loop was a quiet milestone.

Previously, DLP for Copilot relied on cloud context: SharePoint Online, OneDrive for Business, any file Microsoft Graph could reason about. Local files lived outside that story.

Now, Office clients pass sensitivity labels directly into the augmentation loop. Enforcement finally happens at the point of interaction, regardless of file location. This doesn’t make Copilot smarter, but it makes your intent harder to bypass.

That update highlights a contrast: local files keep label‑based enforcement; agent uploads do not. That asymmetry shows where intent is still technically anchored and where it quietly disappears.

My guidance: Anchor your Copilot control story on interaction‑time enforcement, not storage location. Map where labels survive into runtime and where they don’t. If your controls depend on where the file happens to live, your model will not scale.
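A lightweight way to start that mapping is to write the data paths down and flag where labels stop reaching runtime. The Python sketch below encodes only the paths discussed in this post, as the post describes them. `DataPath`, `PATHS`, and `enforcement_gaps` are hypothetical names, and the entries are assumptions to verify in your own tenant, not an authoritative inventory.

```python
from typing import List, NamedTuple

# Hypothetical label-survival map, built from the data paths described in
# this post. Verify each entry against current Microsoft documentation.


class DataPath(NamedTuple):
    name: str
    label_reaches_runtime: bool
    note: str


PATHS: List[DataPath] = [
    DataPath("SharePoint Online / OneDrive for Business", True, "cloud context via Microsoft Graph"),
    DataPath("Local file in an Office client", True, "label passed into the augmentation loop"),
    DataPath("Agent knowledge by reference", True, "content stays in Microsoft 365"),
    DataPath("Agent knowledge by upload", False, "ingested into Dataverse; label not enforceable"),
]


def enforcement_gaps(paths: List[DataPath]) -> List[str]:
    """Return the paths where label-based DLP cannot act at interaction time."""
    return [p.name for p in paths if not p.label_reaches_runtime]


for gap in enforcement_gaps(PATHS):
    print("gap:", gap)
```

Keeping the map as data rather than prose makes it easy to review with risk and audit teams and to update when the platform changes.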

What agents changed

The more I looked at agents, the clearer it became that they don’t reduce risk; they multiply the surfaces of interaction. Every agent is a new execution context, every upload is a silent approval, and every contributor becomes a de facto data owner. The key governance question shifts from “Is this data confidential?” to “Should this data exist in an AI execution context at all?”

If you don’t make that decision explicitly, every upload becomes a silent approval mechanism, one that favors optimism over caution.

My guidance: Treat agents as products with operating models, not as enhanced chat. Decide who can add knowledge, under which conditions, and with what review expectations. If everyone can feed an agent, classification decisions have already left your hands.

Closing thoughts

Across all four posts, one principle keeps proving itself: Copilot doesn’t violate your controls. It executes them at machine speed.

When intent is unclear, outcomes feel unpredictable. When enforcement is inconsistent, Copilot exposes it instantly. When intent disappears along the data path, governance doesn’t weaken, it collapses.

Stopping GenAI from processing what it shouldn’t is not about slowing AI down. It’s about being clear where intent originates, where it’s enforced, and where it fades. Copilot makes those boundaries visible. Ignoring them won’t make them go away.

So far, we’ve explored the controls from a confidentiality perspective: securing sensitive data and applying the relevant controls. Something else is equally necessary, and it requires asking a different kind of question. Not “Can Copilot access this?” but “Should this data still exist in a form Copilot can find at all?”

Once visibility extends into runtime, data lifecycle problems surface too. Copilot doesn’t just expose sensitive data; it also surfaces the stale, the duplicated, and the forgotten. That has nothing to do with AI and everything to do with data hygiene. I’ll end with that teaser: subscribe to stay tuned for the next post in this series!